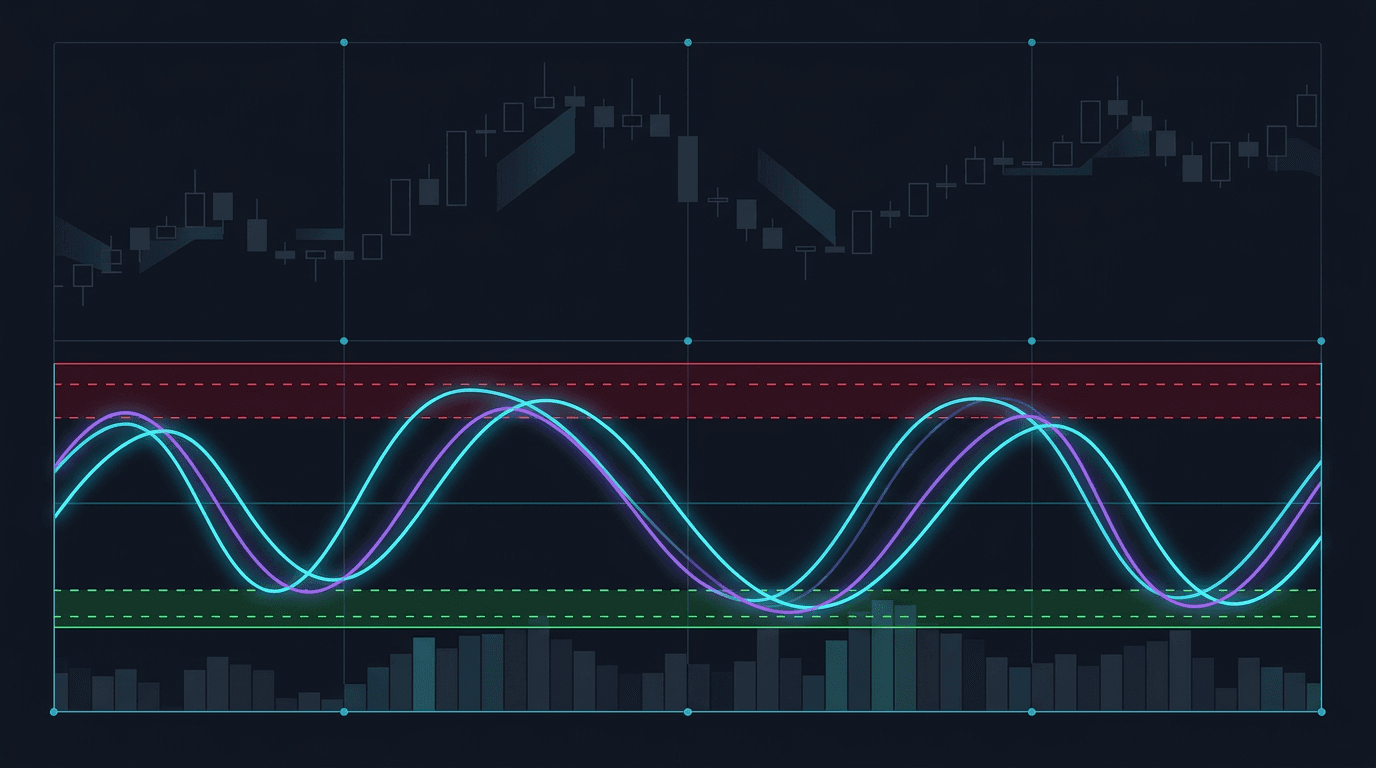

Build a WaveTrend Oscillator in Pine Script v6

Step-by-step guide to coding the WaveTrend oscillator from scratch — smoothing math, OB/OS bands, cross signals, histogram, and divergence alerts.

Master algorithmic trading with expert guides on Pine Script, MQL5, and proven strategies. From beginner to advanced.

36 articles found

Step-by-step guide to coding the WaveTrend oscillator from scratch — smoothing math, OB/OS bands, cross signals, histogram, and divergence a...

Master ICT premium/discount zones and Optimal Trade Entry (0.62–0.79 Fib), then build a Pine Script v6 indicator that auto-plots every level...

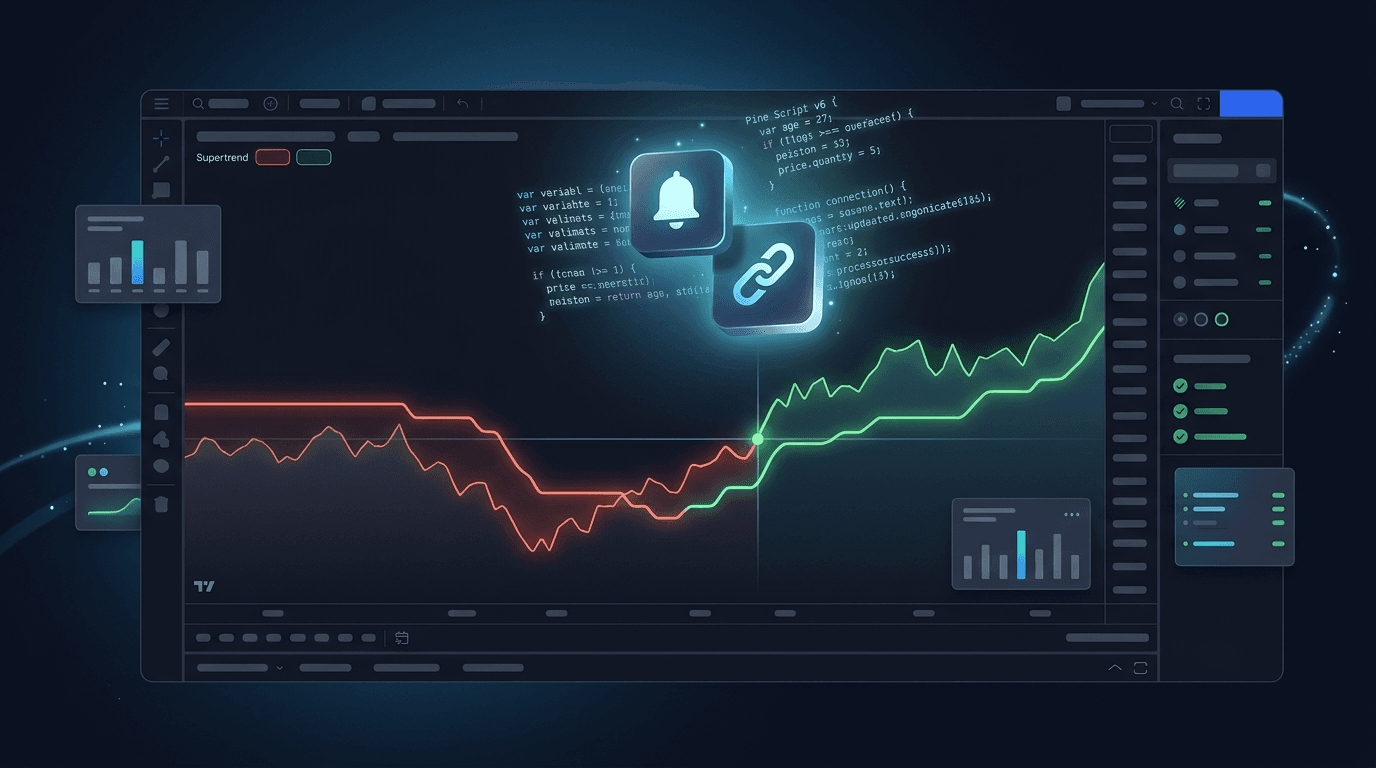

Build a fully automated Supertrend strategy in Pine Script v6 — entries, exits, ATR trailing stops, EMA filters, and webhook alerts for brok...

Master the simple moving average crossover strategy with exact parameters, Pine Script v6 code, RSI/MACD filters, and backtested rules that ...

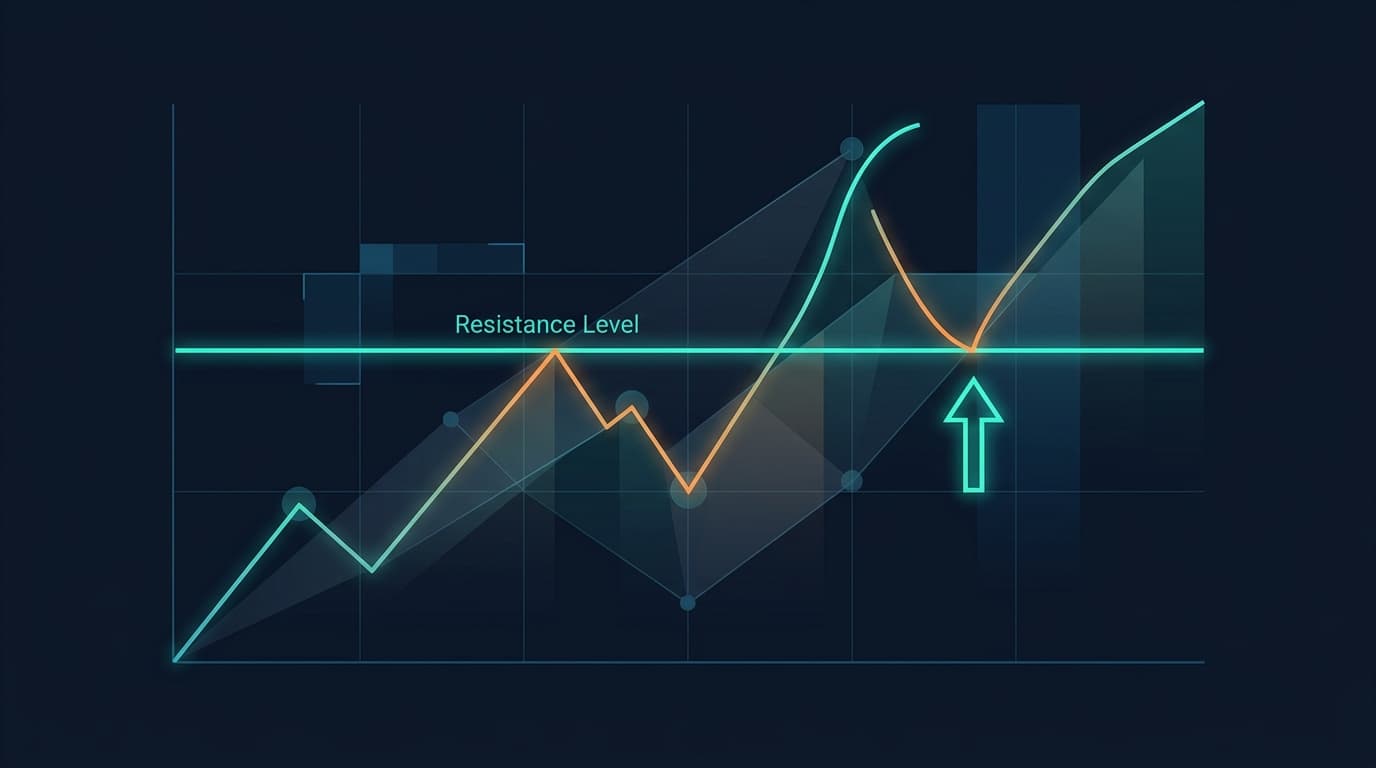

Master the break and retest strategy with exact entry rules, Pine Script code, and pro tips for swing trading stocks, forex, and crypto.

Learn how to build your first MetaTrader 5 indicator without writing a single line of code. This beginner-friendly guide shows you how to us...

Learn how liquidity sweeps work and why smart money hunts stop losses before major moves. Includes identification techniques, trading strate...

Compare Pine Script and MQL5 to decide which trading language to learn first. We cover differences, use cases, difficulty, and help you choo...

Learn proven Bollinger Bands strategies for day trading. Includes setups, entry rules, backtesting tips, and Pine Script code for TradingVie...

Learn how to create an automated trading bot for MetaTrader 5 without coding. Step-by-step guide using AI tools to generate, test, and deplo...

Compare RSI and MACD indicators side-by-side. Learn when to use each momentum indicator, their strengths, weaknesses, and how to combine the...

Learn ICT (Inner Circle Trader) concepts including order flow, liquidity pools, market structure, order blocks, and fair value gaps. Complet...

Learn how to backtest a trading strategy properly. This step-by-step guide covers backtesting basics, common mistakes, key metrics to track,...

Master Fair Value Gaps (FVG) trading with this complete guide. Learn how to identify, trade, and combine FVGs with other concepts for high-p...

Discover the best Pine Script strategies for TradingView. From EMA crossovers to smart money concepts, learn proven strategies with code exa...

Learn how the ATR (Average True Range) indicator works and how to use it for stop losses, position sizing, and volatility analysis. Includes...

Learn how to swing trade mean reversion across stocks, forex, and crypto. Use moving averages, Bollinger Bands, and premium/discount concept...

Learn Smart Money Concepts (SMC) the right way. Understand market structure, liquidity grabs, fair value gaps, and order blocks—and see how ...

Learn how order blocks and liquidity zones actually work in Smart Money Concepts (SMC). Understand how institutions use liquidity, how to ma...

Learn practical mean reversion trading strategies that target the “fair value” of price. See how RSI, Bollinger Bands, and VWAP pullbacks wo...

Master market structure the way SMC traders see it. Learn HH/HL vs LH/LL, BOS vs CHOCH, and how to start visualizing structure in Pine Scrip...

See how to transition from classic retail trading concepts to Smart Money style thinking. Learn how support/resistance, indicators, and patt...

Understand fair value gaps (FVG) and imbalances in Smart Money Concepts. Learn how displacement creates FVGs, how to use them for entries, a...

Learn how to day trade using Smart Money Concepts (SMC). Understand intraday structure, liquidity hunts, kill zones, and how to frame EURUSD...

Learn how to combine Smart Money Concepts (SMC) with classic indicators like EMA, RSI, and volume. Use indicators as objective filters aroun...

Learn how to backtest Smart Money Concepts (SMC) like BOS/CHOCH, liquidity grabs, fair value gaps, and order blocks. See how to turn ICT-sty...

Learn the most common backtesting mistakes that ruin trading strategies. Discover how to avoid overfitting, look-ahead bias, survivorship bi...

Complete Pine Script tutorial for beginners. Learn how to code your first TradingView trading strategy from scratch with step-by-step instru...

Compare MQL5 and Pine Script for algorithmic trading. Learn the key differences between MetaTrader 5 and TradingView, which platform suits y...

Master trading risk management with proven strategies for position sizing, stop loss placement, and risk-reward ratios. Learn Kelly Criterio...

Master Bollinger Bands trading strategies for mean reversion, breakouts, and squeeze plays. Learn how to trade BB bounces, band walks, and v...

Complete guide to automating trading strategies on MetaTrader 5. Learn how to set up Expert Advisors, configure VPS hosting, run 24/7 automa...

Master trading backtesting metrics to evaluate strategy performance. Learn how to interpret win rate, profit factor, Sharpe ratio, maximum d...

Discover the most effective technical indicators for day trading stocks, forex, and crypto. Learn how to combine RSI, VWAP, EMA, and volume ...

Compare EMA vs SMA for trading with backtested results. Learn the differences between Exponential and Simple Moving Averages, when to use ea...

Learn how to build profitable trend-following trading strategies with complete code examples. Master Donchian Channels, moving average syste...
